My last post was about my new server: HomeLab Stage XLVII: New Hardware. Why so much new power? The answer is Nvidia! Nearly all of my customers are looking into solutions from Nvidia. Most of them start with vGPU technology, some run Nvidia Networking (Mellanox) gear, and some use Nvidia's AI / Machine Learning / Deep Learning configurations. But there are many more use cases available.

Nvidia vGPU (Tesla T4):

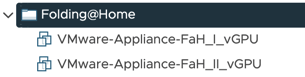

I have been using Nvidia GPUs for several years. Gaming was the starting point, of course, but nowadays my main focus is VDI / Terminal Services. vGPU is absolutely useful when running Windows 10 or Server 2016/2019 RDSH instances. I have configured it inside my new main cluster, the Dell XR2:

Three of my four servers are equipped with an Nvidia T4 GPU, and several VMs are configured with vGPU.

I use the T4-2Q profile for all of my VMs so that I can migrate them between hosts (each of my T4 cards has only one physical GPU, so I cannot mix Nvidia profiles on a card).
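To make the sizing behind a single profile concrete, here is a minimal Python sketch. It assumes the Tesla T4's 16 GB of GPU memory and that the number in a Q-series profile name (the `2` in `T4-2Q`) is the frame buffer size in GB. The helper names `profile_size_gb`, `slots_per_gpu` and `can_place` are hypothetical and only illustrate the "one profile per GPU" rule described above; they are not part of any Nvidia or VMware tool.

```python
T4_FRAMEBUFFER_GB = 16  # assumption: Tesla T4 with 16 GB of GPU memory


def profile_size_gb(profile: str) -> int:
    """Frame buffer size encoded in a profile name, e.g. 'T4-2Q' -> 2."""
    return int(profile.split("-")[1][:-1])


def slots_per_gpu(profile: str, framebuffer_gb: int = T4_FRAMEBUFFER_GB) -> int:
    """How many vGPUs of one profile fit on a single physical GPU."""
    return framebuffer_gb // profile_size_gb(profile)


def can_place(requested: str, running: list[str]) -> bool:
    """Model of the 'no mixed profiles' rule: a GPU accepts a new vGPU only
    if it is empty, or it already runs the same profile and has a free slot."""
    if not running:
        return True
    same_profile = all(p == requested for p in running)
    return same_profile and len(running) < slots_per_gpu(requested)


if __name__ == "__main__":
    per_gpu = slots_per_gpu("T4-2Q")
    hosts_with_t4 = 3  # three of the four servers carry one T4 each
    print(f"T4-2Q vGPUs per T4: {per_gpu}")
    print(f"T4-2Q vGPUs in the cluster: {hosts_with_t4 * per_gpu}")
    # A VM with a different profile cannot land on a GPU that already runs T4-2Q.
    print(can_place("T4-4Q", ["T4-2Q"]))
```

With the same profile everywhere, any free slot on any T4 is a valid migration target, which is the reason for standardizing on T4-2Q.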

I have configured two Linux-based VMs for the Nvidia license server:

Some of my customers use vGPU technology as a starting point for machine learning workloads. Why not? I have also configured some of my VMs for machine learning:

OK, that's the vGPU part, but that's not all the Nvidia inside my environment:

Dynamic DirectPath I/O (Quadro P4000):

I am using two Nvidia Quadro P4000 GPUs inside my Dell VRTX chassis. They are configured for the new vSphere 7.x feature called Dynamic DirectPath I/O.

Both P4000 GPUs are configured within the VRTX chassis for PCIe passthrough.

Each VM is configured with the Dynamic DirectPath I/O alias:

The native Nvidia drivers are installed inside the VMs. Everything runs fine, including vSphere HA. Only vMotion and DRS do not work with this solution. This environment can be used for any of the Nvidia use cases: CAD, graphics, or machine learning.

Bitfusion Cluster (Tesla P4):

In the last episode I also created a new cluster based on 4 x Dell R730. Each of these nodes is equipped with one Nvidia P4 GPU. This cluster will be used for VMware Bitfusion:

The servers communicate via 10GbE: one link for vMotion / vSAN and the other for Bitfusion high-speed networking.

The Bitfusion Server VMs are configured with DirectPath I/O (PCIe passthrough), and the Bitfusion Client VMs can run inside any of my clusters and access the GPUs via the LAN.

vSGA / vDGA (Tesla P4):

I am using 2 x Nvidia Tesla P4 on my Fujitsu cluster for vSGA or vDGA workloads.

What is the difference between the solutions?

With vSGA, as with vGPU, an Nvidia driver is installed in the ESXi hypervisor, but with vSGA you don't install any special driver inside the VMs and you don't need an Nvidia license. You get only a limited set of GPU features and limited support from third-party vendors.

vGPU offers the best combination of performance and features for multiple VMs. You need a vGPU-enabled GPU and, of course, licenses.

vDGA is nothing more than PCIe passthrough (DirectPath I/O). One VM uses the full power and features of the GPU, but only one!
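The comparison above can be summarized as a small lookup table. The following Python sketch is only an illustration of the trade-offs described in this post: the `GpuMode` class, its field names and the `suggest` helper are hypothetical, and the licensing field for vDGA is left as `None` because the post does not state it.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class GpuMode:
    name: str
    shares_gpu: bool                  # several VMs can use one physical GPU
    guest_driver: bool                # Nvidia driver installed inside the VM
    license_required: Optional[bool]  # None = not stated in the post
    full_features: bool               # full GPU feature set available to the VM


MODES = [
    GpuMode("vSGA", shares_gpu=True, guest_driver=False,
            license_required=False, full_features=False),
    GpuMode("vGPU", shares_gpu=True, guest_driver=True,
            license_required=True, full_features=True),
    GpuMode("vDGA", shares_gpu=False, guest_driver=True,
            license_required=None, full_features=True),
]


def suggest(need_full_features: bool, vms_per_gpu: int) -> list[str]:
    """Return the modes that satisfy the feature and sharing requirements."""
    return [
        m.name
        for m in MODES
        if (m.full_features or not need_full_features)
        and (m.shares_gpu or vms_per_gpu <= 1)
    ]


if __name__ == "__main__":
    print(suggest(need_full_features=True, vms_per_gpu=4))
    print(suggest(need_full_features=True, vms_per_gpu=1))
    print(suggest(need_full_features=False, vms_per_gpu=4))
```

A real decision also depends on the GPU model, the vSphere version and vendor support, which this table deliberately leaves out.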

High-End Workstation (RTX8000):

I have created a dedicated blog post about my new high-end workstation, which includes two Nvidia RTX8000 GPUs connected via NVLink:

Check out the post here: HomeLab Stage XLV New Monster Workstation

Currently I am looking at the VMware Project VXR solution to use my RTX8000 GPUs inside one of my existing vSphere / vSAN clusters:

Stay tuned for news about that awesome setup!

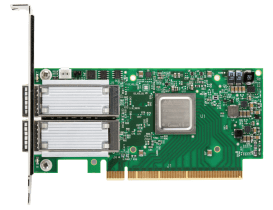

Nvidia Networking (ConnectX-3, 4 and 5):

I have been using Mellanox NICs for several years, both in my HomeLab and in customer projects. When building my 40GbE environment I found ConnectX-3 Pro dual-port NICs very cheap on the internet, and they work without any problems. I re-flashed the OEM firmware with the original Mellanox version without issues.

My high-end workstation is equipped with a ConnectX-4 Lx card: single-port 40GbE with an optical GBIC for LC connections to my datacenter. The ConnectX-3 cards don't deliver enough power for the optical GBIC.

Inside my newest cluster (Dell XR2) each server is equipped with a dual-port ConnectX-5 card supporting outstanding speeds. More on that in a dedicated blog post… 🙂

Currently I use cables from Nvidia Networking (Mellanox LinkX) for most high-performance connections. I try to use as many Direct Attach Copper (DAC) cables as possible to save power on the switch side, because Active Optical Cables (AOCs) and transceivers need more power than the DAC versions. I also use some splitter cables so that one switch port can serve multiple servers.

I really love working with the different Nvidia solutions, and so do my customers!

Check out the next episode of my journey here: Dell + VMware Integrations